New Applications Driving Higher Bandwidths – Part 4

TEF21 Panel Q&A – Part 4

Nathan Tracy, Ethernet Alliance Board Member and TE Connectivity

Brad Booth, Microsoft

Tad Hofmeister, Google

Rob Stone, Facebook

Addressing Emerging Network Challenges

According to Cisco’s Annual Internet Report (2018-2023), the number of devices and connections is growing faster than the world’s population. When this growth is compounded by the meteoric rise of higher-resolution video (expected to hit 66 percent by 2023), surging M2M connections, Wi-Fi’s ongoing expansion, and increasing mobility among Internet users, the impact on the network is significant.

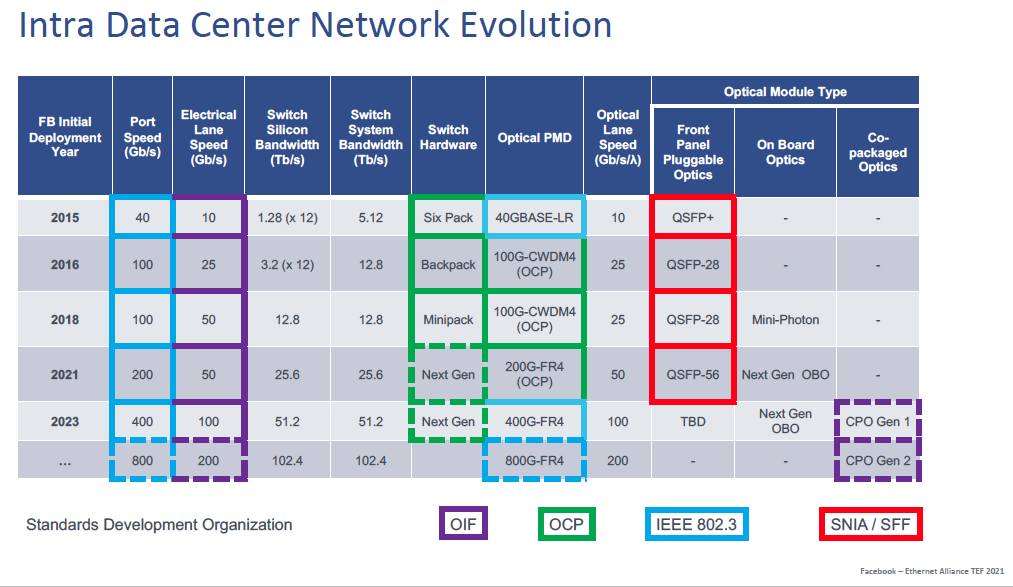

Day Two of the TEF21: The Road Ahead conference focused on today’s explosive application space as a driver for higher speeds. In New Applications Driving Higher Bandwidths, moderator and Ethernet Alliance Board Member Nathan Tracy of TE Connectivity and panelists Brad Booth of Microsoft (Paradigm shift in Network Topologies), Facebook’s Rob Stone (Co-packaged Optics for Datacenters), and Tad Hofmeister of Google (OIF considerations for beyond 400ZR) discussed how their organizations plan to address the emerging network challenges wrought by today’s mounting bandwidth demands.

At the presentation’s conclusion, the audience engaged with panelists on numerous questions about technology developments needed to address escalating speed and bandwidth requirements. Their insightful responses are captured below in part four of a five-part series.

CPO Benefits & Related Standardization Efforts

Nathan Tracy: Is CPO more of a power reduction play? Or do you see some other benefits coming from CPO as well?

Rob Stone: It’s both. When you start to build wider optical chipsets, things get cheaper. You get to amortize the cost of the packaging over all of the bandwidth in that chipset. So, it’s a cost play, it’s also a power play, and it’s also about being able to build something efficient, which will enable some of these very dense accelerators to have the appropriate I/O. We’ve got to be able to shrink this stuff down and make it denser. So I think this satisfies many facets.

Brad Booth: That’s why this has to start now. Any time we’re asking for this massive a change in how we build and deploy these networks, there’s a lot associated with it: how you mix and match some of these systems, and how you make sure there’s interoperability. What are the test points? How do you build these structures? What’s the difference in how you deploy? What does it mean for how your supply chain model works? This all has to be figured out. We can’t just assume, oh, this is going to arrive on my doorstep five years from now and be ready to be deployed. It’s not. There’s a lot that we have to learn, and learn from, as we build these systems out.

Nathan Tracy: How achievable do we think the vision of multivendor is? How hard will it be to create that market? What’s it going to take for interoperability and multivendor?

Nathan Tracy: How achievable do we think the vision of multivendor is? How hard will it be to create that market? What’s it going to take for interoperability and multivendor?

Brad Booth: Any time we’ve seen multivendor interoperable solutions, there have usually been specifications or standards that people can reference and build to. That’s going to be part of it. And thankfully, guys like Tad and Rob and myself and others are involved in the standards bodies. We are actively working with the OIF, COBO, and whatever other organization is necessary to make this progress and see this development through. That’s a critical aspect of it. There are a lot of people on the call today, a lot of industry experts across various organizations, who can bring some of that knowledge and influence to helping us create the specifications and potentially avoid some of the pitfalls. But I don’t expect that what we have as a day-one solution is necessarily going to be the same as what we have five years later. But we’ve got to start somewhere, and we’ve got to work with the industry and encourage them to start that work together. Even if proprietary solutions come up, hopefully there’s a confluence that will bring everybody toward some standardized effort. Interestingly enough, this ties back a little bit into IEEE 802.3, and I don’t want to completely skip over that. That’s part of the paradigm even in IEEE 802.3, where we’ve kind of viewed the world as being pluggable optics and pluggable DAC and such. So, okay, if we start doing co-packaged optics, how does that change the implications for how we write those standards? There are a lot of implications that IEEE 802.3 is going to have to consider.

Rob Stone: I would agree with Brad in terms of the importance of the standards. I would also say that the embodiment of co-packaged optics that Microsoft and Facebook have published in the JDF was done with a mind to making standardized interfaces, so that it can be an ecosystem. I would acknowledge that there may be denser, more tightly coupled, more highly integrated approaches to co-packaged optics, but some of them might be more difficult to standardize. So we’ve tried to do something where the different folks doing the optics, the DSPs, and the switching silicon all know roughly what they’re contractually responsible for based on our existing standards, so that we feel there’s something practical.

Tad Hofmeister: I want to add that I think the standardization efforts add value to the ecosystem even before there’s a commoditized spec, because they help align end-user requirements. Even if somebody comes out with a proprietary solution, presumably there’s a larger market opportunity for them because there has been some alignment in defining the needs for this kind of technology. I think the earlier these standards efforts start, the better, even though it is an immature technology. I’m not optimistic that we’ll have a completely interchangeable, plug-and-play solution in the next few years. This is going to be a long, multi-year effort, and I think we should be happy with intermediate goals.

To access TEF21: New Applications Driving Higher Bandwidths on demand, and all of the TEF21 on-demand content, visit the Ethernet Alliance website.