New Applications Driving Higher Bandwidths

TEF21 Panel Q&A – Part 5

Nathan Tracy, Ethernet Alliance Board Member and TE Connectivity

Brad Booth, Microsoft

Tad Hofmeister, Google

Rob Stone, Facebook

Addressing Emerging Network Challenges

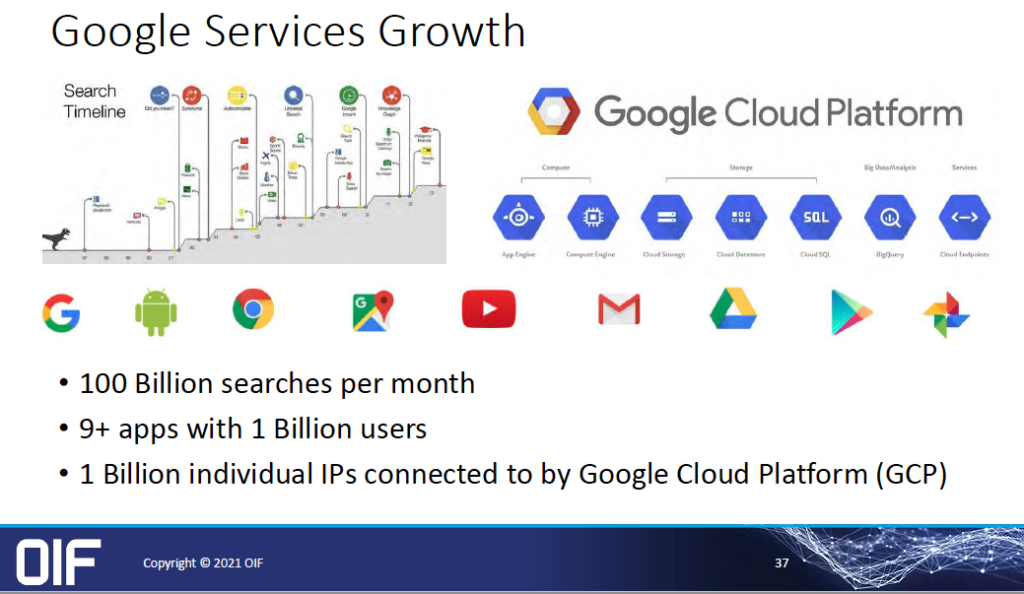

According to Cisco’s Annual Internet Report (2018-2023), the number of devices and connections is growing faster than the world’s population. Compounded by the meteoric rise of higher-resolution video (4K sets are expected to make up 66 percent of connected flat-panel TVs by 2023), along with surging M2M connections, Wi-Fi’s ongoing expansion, and increasing mobility among Internet users, this growth has a significant impact on the network.

the meteoric rise of higher resolution video – expected to hit 66 percent by 2023 – along with surging M2M connections, Wi-Fi’s ongoing expansion, and increasing mobility among Internet users, the impact to the network is significant.

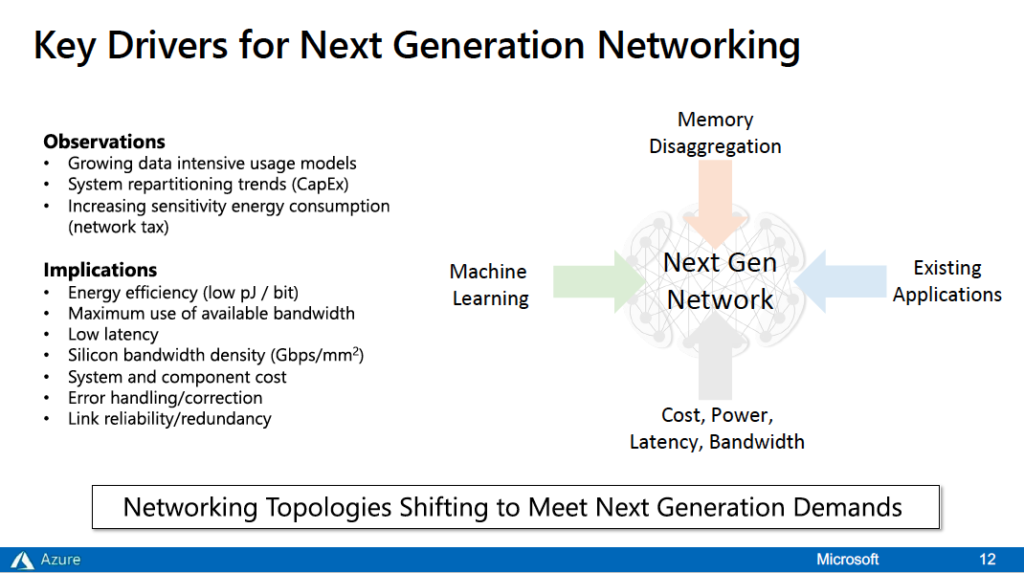

Day Two of the TEF21: The Road Ahead conference focused on today’s explosive application space as a driver for higher speeds. In New Applications Driving Higher Bandwidths, moderator and Ethernet Alliance Board Member Nathan Tracy of TE Connectivity and panelists Brad Booth of Microsoft (Paradigm Shift in Network Topologies), Facebook’s Rob Stone (Co-packaged Optics for Datacenters), and Tad Hofmeister of Google (OIF Considerations for Beyond 400ZR) discussed how their organizations plan to address the emerging network challenges created by today’s mounting bandwidth demands.

At the presentation’s conclusion, the audience engaged with panelists on numerous questions about technology developments needed to address escalating speed and bandwidth requirements. Their insightful responses are captured below in the last of this five-part series.

CPO Predictions

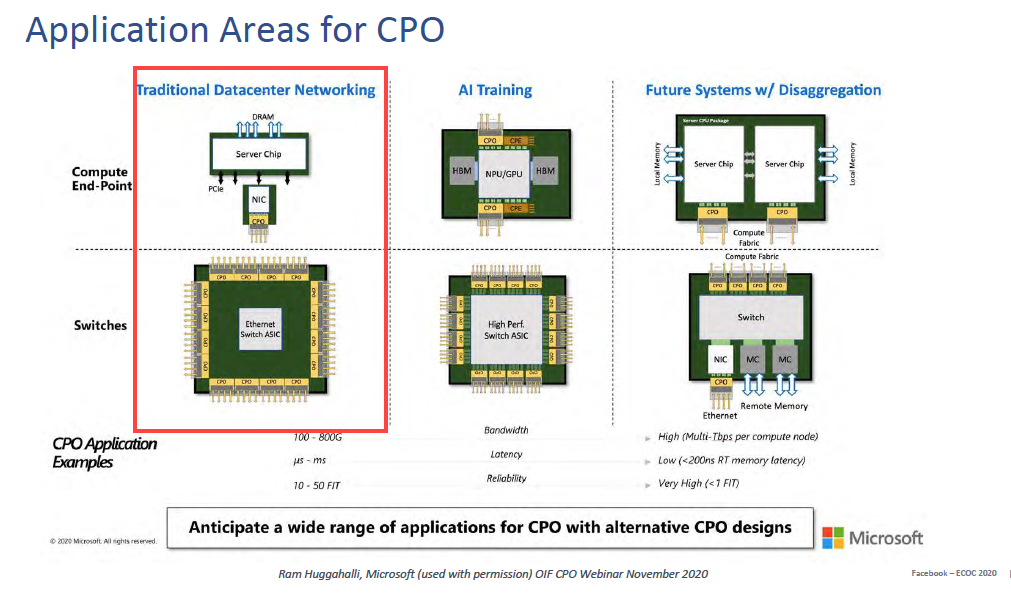

Nathan Tracy: Anyone want to be provocative and provide any estimate on what percentage of the market is CPO by 2025? How fast does it happen?

Brad Booth: By 2025, I think as much as 50% of new equipment purchased that year could be “CPO-based.” Past 2025, at least from my perception of our deployments and the transitions we’re looking at, I think adoption will be pretty consistent. The only question for us is our metro links. Where we’re going to be using 400ZR, do we continue to use faceplate pluggables? Do we look at an onboard optics system, or what some people are calling near-chip optics? Or do we go to some type of coherent optics embedded in a CPO-type environment? Unfortunately for coherent, the CPO aspect is a little more difficult, and it probably doesn’t make sense to build a system with half the ports CPO and the other half faceplate pluggable when the rest of your data center has already gone CPO. We’ve got to find some way to build these whole systems in a contained environment where the optics are already pre-populated. Eventually, past 2025, you won’t be buying these systems with open ports on them. You’ll be buying them fully populated and ready to go.

Brad Booth: By 2025, I think we could see as high as 50% of new equipment purchased in 2025 as “CPO-based.” Past 2025, I think the adoption, at least from my perception of looking at our deployments and our transitions that we’re looking at, will be a pretty consistent transition. The only question for us is on our metro links. Where we’re going to be using 400 ZR, do we continue to use faceplate pluggables? Do we look at an onboard optics system or what some people are calling near chip optics or whatever? Or are we going to go to some type of coherent embedded into a CPO type of environment? And I think unfortunately for coherent, the CPO aspect is a little more difficult and so it probably doesn’t make sense to build a system with half the ports CPO and the other half faceplate pluggable when the rest of your data center has already gone CPO. We’ve got to find some way to have these whole systems built in a contained environment where the optics are already pre-populated on these systems. Eventually past 2025, you won’t be buying it with open ports on it on those systems. You’ll be buying it fully populated ready to go.

Rob Stone: I’m not brave enough to volunteer a number, but what I would say is that it depends on the market segment. Inside our data center, within the server or endpoint connectivity realm, it could go very high. But as Brad pointed out, there are other interfaces: longer-distance interfaces, coherent, and so forth. Also, smaller data centers won’t need this level of integration for a while. So I think it really depends, but our goal is that by the time we get to 100-terabit switches, we want to switch over 100%, at least for the intra-data center links.

Tad Hofmeister: Ten percent. Let me clarify that I assume you mean the total addressable market, and you’re probably talking to the three leading adopters. Then there’s Tencent, Alibaba, AWS, and others. If you look at the total volume of ports shipped, I think the rest of the market will lag by 3 or 4 years. Even if all three of us adopt it, we probably represent only 25-30% of the overall market.

Brad Booth: Honestly, I think Tad nailed it. There are plenty of other players out there who question it. I know they’re all watching the CPO program; we hear from them quite often. I would imagine they’re very interested in this, too, because they have a vested interest. I know some of them are using a mix of DAC and optics off their servers today and are changing their topologies and looking at ways to improve their power usage. Once an ecosystem is available, if there is one by 2025, it will help multiple end users adopt faster. Without a healthy ecosystem, it’s going to be pretty hard, because you’re potentially going to be dealing with supply chain issues and proprietary solutions.

them quite often. I would imagine they’re very interested in this, too, because they have a vested interest. I know some of them are using a mix of DAC and optics off of their servers today and are changing their topologies and looking at ways to improve their power usage, etc. Once an ecosystem’s available, if there is an ecosystem by 2025, then it does help accelerate the ability for multiple end users to adopt. Without a healthy ecosystem, it’s going to be pretty hard because you’re going to be dealing with supply chain issues and proprietary solutions potentially.

Nathan Tracy: It’s good to see some common ground – power, cost, et cetera – amongst the three hyperscalers that we have represented today. Is there an area where there’s not an alignment and you want to try and bring everyone else on board?

Brad Booth: I think that’s interesting, because we all run very different applications. What Facebook offers is very different from what Microsoft offers. Google and Microsoft compete on some levels but not on others. Our infrastructure is set up in a very specific way, and we made a transition a number of years ago: back in 2016, we made a conscious choice to change how we built our data centers, moving from the campus style to the regional style. What’s been interesting is hearing Tad talk about how they’re transitioning to that, too. Part of that is simply the ability to grow that fast and to distribute your power footprint across a metro region. We’re going to have different views. Yet Facebook, which has a very different operating model from Microsoft, agrees with us on co-packaged optics, and in talking to some of my colleagues at Google and AWS, I know they’re interested in this, too. It’s a trend that’s going to happen, but how people build their networks is going to be interesting, because we’ll be looking more at what the SerDes rate is and what the optical lambda rate is, and making sure they match as well as possible so I don’t have to put retimers and gearboxes in my system. I would use my tier-two switches to do the rate matching. As Tad was saying, they look at driving and bringing in new bandwidth in that capability. We have different building models and different deployment models. There is a conscious choice in how you want to build it, so are we all going to be on the same page? No. A financial institution is on a very different page from the corporate environments of the past and from the enterprise. That’s going to happen a little with hyperscalers: there will be some similarities but also some differences, so be prepared for that. But if the specifications give us the flexibility to build as we wish, then I think everyone’s going to be a winner, because we’ll all be building off the same core technologies.

Tad Hofmeister: I feel like you can’t describe everything Google is doing in one talk, and I imagine the same is true for Microsoft and Facebook. While you can identify differences, I think we’re all so large that there is overlap. I can’t think of anything I would need to convince Rob or Brad of that some part of Microsoft or Facebook isn’t already doing.

Rob Stone: I’m actually kind of surprised that, given the different personalities of our organizations and the different services we offer, we have as much commonality as we do. And I think that’s healthy for the industry, because then the industry doesn’t need to build three different things for three different hyperscalers. That leads to economies of scale and all the good things that we enjoy. So I think it’s important that we continue to collaborate as we are doing, and there will be differences. I don’t think it’s my place to tell Brad or Tad what their organizations should be doing. I also think you don’t want us all completely aligned. If we were all completely aligned and doing exactly the same thing, there would be no innovation and no competitiveness. Having us compete in some ways and innovate in others helps create these new solutions and new ideas.

To access TEF21: New Applications Driving Higher Bandwidths on demand, and all of the TEF21 on-demand content, visit the Ethernet Alliance website.